As my frequent readers know, I’ve been experimenting with neural networks (a type of artificial intelligence) over the past couple of weeks. More specifically, I’ve been using neural networks for image recognition. Have a look at the previous couple of posts on this blog for a problem description.

In my continued attempts to ‘fine tune’ my neural network application, I noticed that the quality of the final outcome, that is, either a clear separation of males from females (‘good outcome’) or a complete mix (‘bad outcome’), is extremely dependent on the initial coefficients given to the program. So dependent, in fact, that the difference between 0.00005 and 0.00003 is extreme:

Below is a graph of 10,000 iterations where the initial coefficients were slightly off:

As can be seen from the graph, with these coefficients the program is absolutely incapable of differentiating between male and female images.

Tweaking the coefficients by a few fractional steps makes the separation clear:

How can such minimal differences in initial conditions have such a huge impact…?

The ‘big picture’ answer: we are dealing with non-linear complex systems, stupid!

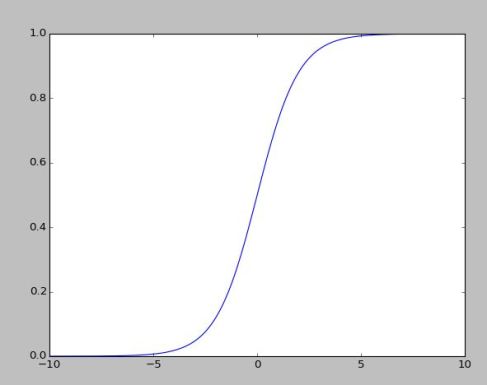

The slightly more detailed answer to that question lies in the filter function, the Sigmoid, whose job is to map values from a large range, ideally [-5 .. 5] but often much broader than that, to the range [0..1], where 0 is a clear ‘woman’ and 1 is a definite ‘man’.

Now, that doesn’t seem to be such a hard problem, does it…? No: if we were dealing with linear deterministic systems, where we *know* that the inputs to the Sigmoid will always be in the desired range, then it would not be a problem.
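For readers who want to see the mapping in numbers, here is a minimal sketch of a Sigmoid filter. The 0.5 cut-off between ‘woman’ and ‘man’ and the helper name `classify` are assumptions made for illustration only, not taken from my actual program.

```python
import math

def sigmoid(x: float) -> float:
    """Logistic function: squashes any real number into the range 0..1."""
    # Two branches so that math.exp never receives a large positive argument.
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)

def classify(x: float, threshold: float = 0.5) -> str:
    """Hypothetical helper: values near 0 read as 'woman', near 1 as 'man'."""
    return "man" if sigmoid(x) >= threshold else "woman"

for x in (-5.0, -1.0, 0.0, 1.0, 5.0):
    print(f"input {x:+.1f} -> sigmoid {sigmoid(x):.4f} -> {classify(x)}")
```

Inputs inside [-5 .. 5] spread out over almost the whole 0..1 range, which is what makes them usable for classification.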

But we are not dealing with a deterministic linear system. We are dealing with a system where randomness and non-linearity are key ingredients in establishing the initial conditions:

My neural network has 100 input nodes, each of which accepts a single pixel from the 10 x 10 image. Each pixel value is in the range [0..255]. There are 100 such pixels, and we want the sum of these pixels to fit the range [-5 .. 5], which is the active range of the Sigmoid filter (see image below). How do we get the sum of these random inputs, each ranging over [0..255], into the range [-5..5]?

So, we multiply each pixel value by a random number from a specific range, so that the sum of all 100 pixels lands in the desired range [-5 .. 5]. Getting this mapping right is very delicate: the coefficients need to be ‘exactly right’, and an error of one part in 1000 might render the system worthless….
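To get a feel for how the weight range controls where the sum lands, here is a rough sketch. It assumes uniformly random pixels and weights drawn uniformly from [-scale, scale]. The three scale values and the function names are hypothetical, picked only to show the trend, and are not the coefficients from my program.

```python
import random

def weighted_sum(pixels, scale, rng):
    """Sum of pixel * weight, each weight drawn uniformly from [-scale, scale]."""
    return sum(p * rng.uniform(-scale, scale) for p in pixels)

def fraction_in_active_range(scale, trials=2000, seed=0):
    """Share of random 100-pixel images whose weighted sum falls in [-5, 5]."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        pixels = [rng.randint(0, 255) for _ in range(100)]  # stand-in for a 10 x 10 image
        if -5.0 <= weighted_sum(pixels, scale, rng) <= 5.0:
            hits += 1
    return hits / trials

for scale in (0.001, 0.005, 0.05):  # hypothetical weight ranges
    share = fraction_in_active_range(scale)
    print(f"scale {scale}: {share:.1%} of sums inside [-5, 5]")
```

Under these assumptions, widening the weight range pushes more and more of the sums out of the Sigmoid's active range.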

Then we repeat the same process for the 10 hidden nodes, to produce the single output value, which is also subjected to a Sigmoid filter.

The same sensitivity to the precision of the inputs applies here.
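The two stages described above (100 inputs to 10 hidden nodes, then 10 hidden nodes to one output) can be sketched as a single forward pass. This is a simplified illustration, not my actual code: it assumes no bias terms, a Sigmoid on every hidden node, and made-up weight ranges of 0.005 and 1.0.

```python
import math
import random

def sigmoid(x):
    """Logistic function, written so math.exp never gets a large positive argument."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)

def random_weights(rows, cols, scale, rng):
    """rows x cols matrix of weights drawn uniformly from [-scale, scale]."""
    return [[rng.uniform(-scale, scale) for _ in range(cols)] for _ in range(rows)]

def forward(pixels, w_hidden, w_out):
    """100 pixels -> 10 hidden Sigmoid nodes -> 1 Sigmoid output."""
    hidden = [sigmoid(sum(p * w for p, w in zip(pixels, row))) for row in w_hidden]
    return sigmoid(sum(h * w for h, w in zip(hidden, w_out)))

rng = random.Random(42)
w_hidden = random_weights(10, 100, 0.005, rng)   # hypothetical input-to-hidden range
w_out = random_weights(1, 10, 1.0, rng)[0]       # hypothetical hidden-to-output range
pixels = [rng.randint(0, 255) for _ in range(100)]  # stand-in for a 10 x 10 image
output = forward(pixels, w_hidden, w_out)
print(f"network output: {output:.4f}")
```

Note that the second stage sees inputs in 0..1 rather than 0..255, so its weight range has to be chosen separately from the first.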

If you look at the image below of the Sigmoid function, you can see how extremely important it is to get the range right, i.e. [-5 .. 5]. Any values outside of this range produce results close to either 0 or 1. That is, values like -6, -7, -8… and 6, 7, 8… carry no classification capability whatsoever.

We need our values to be in the [-5 to 5] range for a clear separation of targets.
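The flattening outside the active range is easy to check numerically. The sketch below prints the Sigmoid value together with its slope, using the standard identity that the slope equals s(x) · (1 − s(x)). The function name `sigmoid_slope` is my own label for this illustration.

```python
import math

def sigmoid(x):
    """Logistic function, written so math.exp never gets a large positive argument."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)

def sigmoid_slope(x):
    """Derivative of the logistic function: s(x) * (1 - s(x))."""
    s = sigmoid(x)
    return s * (1.0 - s)

for x in (0, 2, 5, 6, 7, 8):
    print(f"x = {x}: sigmoid = {sigmoid(x):.6f}, slope = {sigmoid_slope(x):.6f}")
```

Out at 6, 7 and 8 the outputs differ only in the third decimal place, so two quite different inputs become practically indistinguishable after the filter.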

So, the bottom line is that a tiny difference in initial values can make the system either capable of target detection or completely useless. It takes some careful analysis by a superior brain (like mine:-) to come up with the ‘perfect’ initial coefficients for making the system work. You simply will not find a solution by random ‘trial & error’.

Thus, since our world (the one we live in) is actually alive and well (for the moment), it simply cannot have been constructed from simple random events. The probability of randomness coming up with coefficients that would enable (semi)intelligent life on earth is zero – there must have been a ‘creative designer’ with brain powers like mine selecting the initial coefficients…. 🙂

(yeah, right! 🙂

Now I believe… 😉

me 2.. 🙂